How HCI went from punch cards to pixels, and why the next interface has no screen at all.

There’s a moment in every discipline where the practitioners confuse the tools for the work.

Surgeons aren’t defined by scalpels. Architects aren’t defined by blueprints. But somewhere in the last two decades, an entire generation of designers became defined by screens. By pixels. By the distance between a thumb and a button.

Where interfaces came from

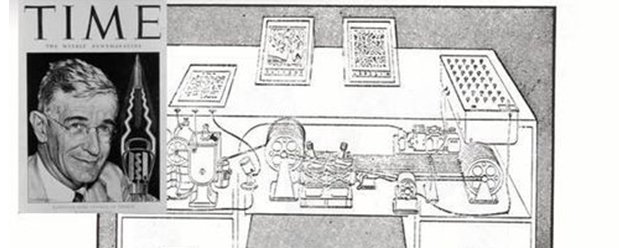

Vannevar Bush wrote “As We May Think” in 1945, describing a machine called the Memex, a desk-sized device that would let a scientist store, retrieve, and link all their research through associative trails. Not menus. Not dropdowns. Trails. Connections. Thought made navigable.

Bush never used the word “interface.” He didn’t have to. The Memex wasn’t about the machine. It was about extending human cognition.

Twenty-three years later, Doug Engelbart stood in front of a thousand engineers in San Francisco and showed them the future: a mouse, hypertext, real-time collaboration, windowed displays, video conferencing. All in 1968. What people forget about that demo is his intent. He wasn’t trying to make computers easy to use. He was trying to augment human intellect. The 1962 report that laid out his framework was literally titled “Augmenting Human Intellect: A Conceptual Framework.” The mouse was incidental. The mission was amplification.

We kept the mouse. We forgot the mission.

How screens took over

Xerox PARC took Engelbart’s ideas and made them visual. Its researchers built the Alto, one of the first computers built around a graphical interface: icons, windows, menus. Alan Kay’s group built the Smalltalk environment on top of it. Then Steve Jobs saw it, and the Macintosh happened.

The Macintosh democratized computing. It brought graphical interfaces to millions. That mattered. But it also locked in a paradigm: the human must see and click. The human must navigate. The human must figure out where things are.

For 40 years, we optimized within that box. Every wireframe. Every user flow. Every A/B test on button color. Every design system. Every Figma file. All of it built on one assumption: a human is staring at a screen, trying to find the next thing to tap.

The iPhone in 2007 didn’t change the paradigm. It shrank it. Same model (human sees, human taps), but now the screen fit in your pocket. Mobile design became the dominant design discipline. Responsive layouts. Thumb zones. Bottom navigation. An entire profession organized itself around the geometry of a 6-inch rectangle.

What design work actually became

Most of what UX designers do today is not design. It’s arrangement.

Moving components around a layout. Choosing between a modal and a bottom sheet. Debating whether the CTA should be blue or green. Negotiating with product managers about where the settings icon goes.

This isn’t augmenting human intellect. This is interior decoration for software.

The real design problems (what should this product do, who is it for, what happens when it fails, what does the system need to understand about the user that the user can’t articulate) got outsourced to “product” or “strategy” or “research,” and the designer became the person who makes it look right in Figma.

Jakob Nielsen argued in 2025 that AI collapses the interface into intent. Users declare outcomes instead of manipulating elements. The screen stops being the product. The outcome is the product. He’s right, though the reason is more structural than most people realize.

Why screens are disappearing

The screens aren’t going away because AI is trendy. They’re going away because the primary user of a growing number of systems is not a person. It’s another piece of software.

When you build a traditional application, the user has eyes, fingers, spatial memory, pattern recognition. You design for their visual system, their motor skills, their attention span. When you build an agentic system, the primary “user” is an autonomous process that needs to make a decision, call an API, parse a response, and act, all without ever rendering a pixel.

This is already happening. Customer support agents are resolving tickets end-to-end without a human seeing an interface. Investigation systems are querying databases, cross-referencing records, and generating reports with no dashboard involved. Compliance agents are monitoring transactions, flagging anomalies, and filing reports through APIs. Coding agents are reading repositories, writing pull requests, and deploying changes through terminal commands.

In all of these cases, the “interface” isn’t a screen. It’s a contract. An API schema. A structured prompt. A set of return codes. The new interface is invisible, and that changes what it means to be a designer.
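
To make “the interface is a contract” concrete, here is a minimal sketch of what one might look like for a support agent that issues refunds. Everything in it is invented for illustration: the `RefundRequest` and `RefundResponse` shapes, the `Status` codes, and the 5,000-cent auto-approve limit are assumptions, not any real system’s API.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    """Return codes the agent can branch on without parsing prose."""
    OK = "ok"
    INVALID_REQUEST = "invalid_request"
    NEEDS_HUMAN = "needs_human"


@dataclass(frozen=True)
class RefundRequest:
    """Input side of the contract: what the agent must supply."""
    ticket_id: str
    amount_cents: int
    reason: str


@dataclass(frozen=True)
class RefundResponse:
    """Output side of the contract: what the agent gets back."""
    status: Status
    message: str


# Hypothetical limit above which a person must approve the refund.
AUTO_APPROVE_LIMIT_CENTS = 5_000


def handle_refund(request: RefundRequest) -> RefundResponse:
    """Apply the contract's rules and answer with a machine-readable status."""
    if not request.ticket_id or request.amount_cents <= 0:
        return RefundResponse(
            Status.INVALID_REQUEST,
            "ticket_id and a positive amount are required",
        )
    if request.amount_cents > AUTO_APPROVE_LIMIT_CENTS:
        return RefundResponse(
            Status.NEEDS_HUMAN,
            "amount exceeds the auto-approve limit",
        )
    return RefundResponse(Status.OK, "refund accepted")
```

No layout, no button, no color. The design decisions live in the field names, the status codes, and the limit at which a human gets pulled in.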

What died and what didn’t

UX/UI as a screen-based pixel-pushing discipline is dead. The era where a designer’s primary output is a Figma mockup of a screen layout is ending, and quickly.

What’s not dead is the stuff that was always underneath the pixels.

Understanding human needs, because agents still serve humans and someone has to understand what the human actually wants, not just what they click on.

Systems thinking, because agent architectures are complex and someone has to design the logic of how agents coordinate, escalate, fail gracefully, and explain themselves.

Trust design, because when an autonomous agent moves your money or sends an email on your behalf, the human needs to trust it, and that may be the hardest design problem the field has ever faced.

Ethical architecture, because agents can cause real harm at scale, and someone has to decide what the agent is not allowed to do.

The discipline isn’t dead. The artifact is dead.

What to do about it

Stop thinking in screens. Your deliverable is no longer a mockup. It’s a system map. How do agents interact with each other? How do they interact with humans? What happens when agent A disagrees with agent B? What does the human see when they need to intervene? Learn to map information flows, decision trees, and failure modes. That’s the new wireframe.

Learn to design for two users at once. Every agentic product has at least two: the human who benefits and the agent that executes. The human needs clarity, trust, and control. The agent needs structured data, clear contracts, and deterministic interfaces. Nobody is good at this yet.

Become literate in APIs and system architecture. You don’t need to become an engineer. But you need to understand what an API contract is, what structured data looks like, what happens when a system call fails. Screens were your medium. Structured interactions are your new one.
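
As a rough illustration of “what happens when a system call fails,” the sketch below wraps a call so that the caller always gets structured data back, whether the call worked or not. The names `TransientError`, `CallResult`, and `call_with_retry` are hypothetical, and the three-attempt default is an arbitrary assumption.

```python
from dataclasses import dataclass
from typing import Callable, Optional


class TransientError(Exception):
    """A failure worth retrying, such as a timeout (hypothetical category)."""


@dataclass(frozen=True)
class CallResult:
    """Structured outcome: success and failure share one predictable shape."""
    ok: bool
    value: Optional[str] = None
    error: Optional[str] = None
    attempts: int = 0


def call_with_retry(call: Callable[[], str], max_attempts: int = 3) -> CallResult:
    """Try the call up to max_attempts times, retrying only transient errors.

    Any other exception propagates, because retrying it would not help.
    """
    last_error = ""
    for attempt in range(1, max_attempts + 1):
        try:
            return CallResult(ok=True, value=call(), attempts=attempt)
        except TransientError as exc:
            last_error = str(exc)
    return CallResult(ok=False, error=last_error, attempts=max_attempts)
```

The design questions hide in the details: which failures count as transient, how many retries are acceptable, and what the agent is told when the retries run out.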

Study trust instead of aesthetics. When should an agent ask for permission versus act on its own? How do you communicate uncertainty? How do you let a human override an agent’s decision without breaking the system? Read about human factors in aviation. Read about automation trust in nuclear systems. Read about decision support in medicine. The answers are in incident reports, not on Dribbble.
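
One way to make the permission question tangible is to write the policy down as a rule you can argue about. This is a sketch under stated assumptions, not a recommendation: the `decide` function, the 0.5 escalation floor, and the 0.9 autonomy threshold are all made up for illustration, and real values would come from research and incident data.

```python
from enum import Enum


class Decision(Enum):
    ACT = "act"                   # proceed without asking
    ASK = "ask_permission"        # pause and request approval
    ESCALATE = "hand_off_to_human"  # stop and pass the task to a person


# Hypothetical thresholds; a real product would tune these per action type.
ESCALATE_BELOW = 0.5
ACT_AT_OR_ABOVE = 0.9


def decide(confidence: float, reversible: bool) -> Decision:
    """Pick a behavior from the agent's confidence and the action's reversibility."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence < ESCALATE_BELOW:
        return Decision.ESCALATE
    if reversible and confidence >= ACT_AT_OR_ABOVE:
        return Decision.ACT
    # Irreversible actions, or middling confidence, always go through a person.
    return Decision.ASK
```

Under these assumed rules, `decide(0.95, reversible=True)` acts on its own, while `decide(0.95, reversible=False)` still asks first. Whether that is the right line to draw is exactly the design conversation.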

Get comfortable with invisible work. The best agent-era design will be design nobody sees. The system just works. The right thing happens. Your best work won’t screenshot well. It won’t land on Behance. It’ll live in the architecture of a system that quietly does the right thing, and the only evidence it exists is the absence of friction.

Design the failure modes. In traditional UX, the happy path gets most of the attention. In agent systems, the failure path is the product. What happens when the agent is wrong? What happens when it’s uncertain? What happens when two agents conflict? The designer who maps every failure mode is worth ten designers who can make a perfect happy-path flow.

Let go of Figma as identity. Figma is a tool, not a practice. If it helps you design system behavior, use it. If it doesn’t, learn the tools that do: system diagrams, state machines, decision matrices, behavior specifications.
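
For a sense of what a state machine looks like as a design artifact, here is a small sketch of an agent’s task lifecycle, failure paths included. The state and event names are hypothetical, chosen only to illustrate the idea.

```python
from typing import Dict, Iterable, Tuple

# (current state, event) -> next state. Hypothetical names for illustration.
TRANSITIONS: Dict[Tuple[str, str], str] = {
    ("working", "succeeded"): "done",
    ("working", "uncertain"): "awaiting_approval",
    ("working", "errored"): "failed",
    ("awaiting_approval", "approved"): "working",
    ("awaiting_approval", "rejected"): "human_takeover",
    ("failed", "retry"): "working",
    ("failed", "give_up"): "human_takeover",
}


def next_state(state: str, event: str) -> str:
    """Return the next state, or raise if the design never covered this case."""
    try:
        return TRANSITIONS[(state, event)]
    except KeyError:
        raise ValueError(f"no transition from {state!r} on {event!r}") from None


def run(events: Iterable[str], start: str = "working") -> str:
    """Replay a sequence of events and return the state the task ends in."""
    state = start
    for event in events:
        state = next_state(state, event)
    return state
```

Here `run(["uncertain", "approved", "succeeded"])` ends in `"done"`, and `run(["errored", "give_up"])` ends in `"human_takeover"`. The useful part is the error: any state and event pair missing from the table is a failure mode nobody has designed yet.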

Where this all leads

HCI started as a discipline about making humans more capable through technology. Somewhere along the way, it became a discipline about making technology easier to look at.

Vannevar Bush didn’t dream of pixel-perfect mockups. Engelbart didn’t augment human intellect with component libraries. Alan Kay didn’t invent object-oriented programming so designers could argue about border-radius values.

The agent era brings us back to the original question: how do we use computation to amplify human capability? That’s a better question than “should the button be blue or green.” It’s a harder question. And it’s the one that matters now.

The interface as we knew it is dead. The rectangle, the modal, the dropdown, the toggle: that era is closing. What’s opening is less tidy, less visual, and more consequential. The first designers at Xerox PARC didn’t have a playbook either. They had a set of problems nobody had solved before, and they built the discipline as they went.