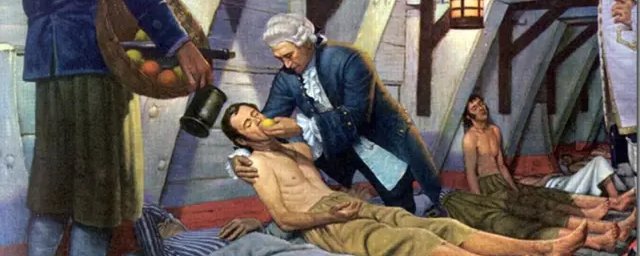

May 1747, Bay of Biscay. James Lind had a problem. His sailors were falling apart—literally. Teeth loosening, wounds reopening, skin bruising from nothing. Scurvy was killing more British seamen than enemy cannons, and nobody knew why.

Lind wasn’t a genius. He was a ship’s surgeon with twelve sick men and a hunch. So he ran an experiment: same symptoms, same conditions, different treatments. Cider. Seawater. Vinegar. Dilute sulfuric acid. A paste of garlic and mustard. And for two lucky sailors, oranges and lemons.

The citrus group recovered in days. One went back to work. The others barely improved.

Medicine often calls this the first controlled clinical trial. Lind had invented controlled comparison on a wooden boat.

Lind published his results in 1753. The Royal Navy took another 42 years, until 1795, to make lemon juice standard issue.

Tens of thousands of sailors died in the interim. The data existed. The institution couldn’t metabolize it.

The System That Was Supposed to Fix This

Modern clinical trials emerged as medicine’s answer to exactly this kind of institutional failure. Randomization, blinding, statistical power, phase gates: a machine designed to force truth through bureaucracy.

It worked. Polio vaccines. Statins. ACE inhibitors. For diseases that hit large populations uniformly, with drugs that did one thing predictably, the system delivered.

Then biology got complicated.

The Pill Framework Meets the Peptide Era

The clinical trial apparatus was built for aspirin. For molecules designed to bludgeon a single pathway in millions of similar bodies.

That’s not what’s coming next.

Peptides, biologics, signaling fragments: these compounds don’t override biology. They negotiate with it. Their effects depend on context: age, inflammation, sleep, metabolic state, time of day. Dose-response curves get weird. The same compound helps one patient and does nothing for another.

We’re still testing them like they’re cholesterol drugs.

Large homogeneous cohorts. Fixed endpoints. Rigid timelines. The entire framework optimized for a single question—does it work?—when the actual question is for whom, when, and why?

A single Phase III trial can cost tens to hundreds of millions of dollars and take years to complete. The FDA approved 55 novel drugs in 2023. Meanwhile, biology keeps generating hypotheses faster than the regulatory pipeline can process them.

The Shadow Trial Network

Here’s what nobody in pharma wants to talk about: the experiments are already happening.

Reddit forums. Longevity clinics. Biohacker Discords. Millions of people running peptide protocols, tracking sleep scores, posting bloodwork, comparing notes. It looks like chaos. It mostly is.

But there’s signal in there. Patterns. Dose ranges that seem to matter. Combinations that work better than monotherapy. Data that exists nowhere in PubMed.

The gap between institutional medicine and grassroots experimentation is now a canyon. One side moves at regulatory speed. The other moves at internet speed. Neither talks to the other.

Lind’s advantage wasn’t genius. It was that he controlled the comparison. The biohacking underground has scale without structure. Outcomes nobody’s synthesizing. Learning that never compounds.

What Lind Actually Proved

The lesson from that ship isn’t about vitamin C. It’s about the relationship between evidence and action.

Lind didn’t know why citrus worked. He didn’t wait for mechanism. He compared outcomes and made a call.

Modern medicine inverted the sequence. We now demand exhaustive mechanistic explanation before behavioral change. Regulators fear false positives more than missed truths. The system optimizes for not being wrong over learning faster.

That made sense when we had crude tools and catastrophic stakes. It makes less sense now.

We have continuous glucose monitors. Wearable HRV. Adaptive trial designs that can shift enrollment based on interim data. Bayesian statistics that update in real time. The technology for trials that learn already exists.

What’s missing is the institutional willingness to use it.

The Actual Choice

Peptides aren’t a niche market. They’re a preview of where medicine is headed: toward compounds that are conditional, contextual, and personalized. Testing them with 1970s methodology isn’t just inefficient. It’s epistemologically wrong.

The alternative isn’t to abandon rigor. It’s to build trials that match the biology: adaptive, stratified, continuous. Trials that treat individual variation as signal instead of noise. Trials that can ask twenty small questions instead of one giant one.

James Lind figured this out with a dozen sailors and some fruit. He didn’t need permission. He needed a comparison group.

The modern research establishment has better tools, better data, and vastly more resources than a ship’s surgeon in 1747.

It also has a 42-year delay baked into its operating system.

That’s the part worth fixing.