A thirty-year history of telecom surfaces, and why the next billion-dollar platform won’t have a screen.

Imagine a woman in rural Tamil Nadu, sometime around 2003. She earns less in a week than the phone in her hand cost. The phone is a Nokia 1100. It has a flashlight. A dust-proof case. Snake II. And the ability to send 160 characters to anyone with a SIM card. She’s not browsing. She’s not scrolling. She is talking to her daughter in Chennai. That’s all the phone needs to do, and it does it without complaint.

That phone sold about 250 million units. It is often cited as the best-selling phone model ever made. Not because it was elegant. Not because Nokia spent millions on interface design. Because it was sufficient. Voice and text. Two surfaces. Nothing more.

Here’s the argument I want to make: every meaningful shift in telecom has been a shift in surface. And after thirty years of that surface expanding, growing more complex, more visual, more demanding of your attention, it is now collapsing. Fast. Back toward voice. Back toward text. Back toward two surfaces. Except this time, what sits on the other end is not a person. It’s an intelligence. And the consequences of that are larger than most people in this industry are prepared to admit.

The Original Surface: Voice and 160 Characters

The first SMS was sent on December 3, 1992. Neil Papworth, a 22-year-old software engineer at Sema Group, typed “Merry Christmas” on a computer and sent it across the Vodafone network to Richard Jarvis, who received it on an Orbitel 901 that weighed over two kilograms. Jarvis was at a Christmas party. He showed it to Vodafone’s CEO, who was reportedly unimpressed.

Nobody understood what they were looking at.

For the next decade, the telecom surface was exactly two things: voice calls and short messages. The 2G era was defined by this constraint. Operators made money on minutes and per-message charges. The business model was pure utility. You paid to be connected, and connection meant one of two modes: real-time audio or 160-character bursts of text.

Then came Value Added Services. In India, caller tunes and ringtone services became multi-thousand-crore businesses in the late 2000s. VAS, which bundled ringtones, wallpapers, horoscopes, cricket scores, and joke-of-the-day services, grew to represent roughly 10-14% of operator revenue around that era.

But VAS was still built on the same surface. Text menus. Shortcodes. Dial *123# and navigate a tree of options using your number pad. The interaction model hadn’t changed. The phone was still fundamentally a voice-and-text machine with a small revenue layer of entertainment bolted on top.

What’s remarkable, looking back, is how much economic activity ran through how little interface. Billions of dollars flowing through systems that had no graphics, no color, no animations. Just numbered lists and confirmation prompts. The surface was minimal. The business was massive.

What Jan Chipchase Saw

While the telecom industry counted minutes and messages, a British researcher named Jan Chipchase was doing something unusual at Nokia. He was watching.

Chipchase spent seven years as Nokia’s Principal Scientist, traveling to Uganda, India, China, Ghana, Brazil, and dozens of other countries, studying not what people said they did with phones, but what they actually did. He sat in slums in Mumbai. He visited phone booths in São Paulo. He watched barbers in Vietnam. Nokia sent him to shanty towns in three countries to run what they called Open Studios: participatory design sessions where communities with limited infrastructure revealed how they adapted mobile technology to their lives.

What he found upended the standard product design playbook.

In his 2007 TED talk, Chipchase described a universal finding that cut across every culture, gender, and income level he studied. When people leave their home, they perform a check for three objects: keys, money, and mobile phone. That’s the center of gravity. That’s what matters. Everything else is optional.

But in emerging markets, the phone wasn’t just a communication device. It was a financial tool, a literacy workaround, and a social infrastructure. Chipchase documented six distinct modes of shared phone use in Uganda: owners acting as informal ATMs, mediated communication that bypassed literacy barriers, missed-call signaling systems, pooled airtime purchasing, community address books, and step messaging where someone physically carried a message between people. His research on how illiterate users navigated phones influenced designs that sold in the hundreds of millions.

This was design at its most consequential. Not pixels. Not interfaces. Understanding how a farmer in rural East Africa uses a $30 device to participate in an economy that had previously excluded him. Chipchase’s insight was that you design for behavior, not for screens. The phone was not the product. The capability it unlocked was the product.

Nokia understood this better than any company on earth. And then Nokia lost the plot entirely.

USSD: The Invisible Interface That Moved Billions

While Silicon Valley was busy designing icons and drop shadows, something more consequential was happening in Nairobi.

On March 6, 2007, the same year Steve Jobs stood on stage and unveiled the iPhone, Safaricom launched M-Pesa in Kenya. The interface was a SIM-toolkit and USSD-style menu. Navigate with numbers. Send money to a phone number. Withdraw cash from an agent.

No app. No browser. No touchscreen. Just a structured text dialogue on a device that cost less than a meal at a San Francisco restaurant.
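To make that pattern concrete, here is a minimal sketch of a numbered-menu dialogue. It is an illustration of the interaction style, not Safaricom's implementation: the menu labels, the field names, and the `step` function are all hypothetical, and the sketch assumes the service keeps the session state while the handset only shows text and sends back digits.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical menu: labels and fields are illustrative, not M-Pesa's real menu text.
MENU = {
    "prompt": "1. Send money\n2. Withdraw cash\n3. Buy airtime",
    "options": {
        "1": {"action": "send_money", "collect": ["recipient phone number", "amount", "PIN"]},
        "2": {"action": "withdraw_cash", "collect": ["agent number", "amount", "PIN"]},
        "3": {"action": "buy_airtime", "collect": ["amount", "PIN"]},
    },
}


@dataclass
class Session:
    """State for one dialogue. The handset only displays text and sends digits."""
    action: Optional[str] = None
    pending: list = field(default_factory=list)
    answers: dict = field(default_factory=dict)


def step(session: Session, user_input: str = "") -> str:
    """Consume one keypad entry and return the next screen of text."""
    if session.action is None:
        option = MENU["options"].get(user_input)
        if option is None:
            # First screen, or the menu shown again after an unrecognized entry.
            return MENU["prompt"]
        session.action = option["action"]
        session.pending = list(option["collect"])
        return f"Enter {session.pending[0]}:"
    # Store the reply to the question that was just asked.
    session.answers[session.pending.pop(0)] = user_input
    if session.pending:
        return f"Enter {session.pending[0]}:"
    return f"Request accepted: {session.action}"


# One complete send-money dialogue: open the menu, then four keypad entries.
session = Session()
screens = [step(session)]
for entry in ["1", "0700000000", "500", "1234"]:
    screens.append(step(session, entry))

print("\n---\n".join(screens))
```

The whole interface is a dictionary and a short function. Everything consequential (balances, authentication, settlement) would sit behind the final screen, which is the point of the section: the capability lives in the infrastructure, not on the handset.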

Within two weeks, 10,000 people had signed up. Within a decade, M-Pesa was processing transactions at massive scale and serving tens of millions of customers. It expanded far beyond Kenya and became a reference architecture for mobile money globally.

Across Sub-Saharan Africa, mobile money became the primary mechanism for financial inclusion. Not banking apps. Not fintech startups with glossy interfaces. USSD menus. In multiple economies, more people hold mobile money accounts than traditional bank accounts. The total value flowing through mobile money systems worldwide now exceeds a trillion dollars annually, which works out to millions of dollars every minute.
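The per-minute figure follows directly from the annual one. As a rough check, assuming a flat $1 trillion a year (a round number chosen for illustration, not a reported statistic):

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes in a non-leap year


def value_per_minute(annual_value_usd: float) -> float:
    """Average value moved per minute for a given annual total."""
    return annual_value_usd / MINUTES_PER_YEAR


# Assumption: exactly $1 trillion per year, used only as a conservative floor.
per_minute = value_per_minute(1e12)
print(f"${per_minute:,.0f} per minute")
```

Even at that floor, the average is a little under two million dollars a minute, all of it initiated from numbered text menus.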

This matters for a reason that most product designers in developed markets have never internalized: the most transformative interface of the 21st century wasn’t graphical. It was a numbered list on a 1.5-inch monochrome screen. The surface was tiny. The impact was enormous.

USSD proved something that Chipchase had been arguing all along. The sophistication of a transaction has nothing to do with the sophistication of the interface. You can move money, pay bills, buy airtime, and save for your children’s school fees through a system that looks, to a Western designer, like something from 1995. What matters is that the system understands what you want and lets you do it with minimal friction. The surface is irrelevant. The capability is everything.

The Keyboard Era and the OS Wars Begin

Then BlackBerry happened.

BBM launched in 2005, and for a stretch of about seven years, it defined what mobile communication looked like for urban professionals, teenagers in Jakarta, and anyone who wanted to feel important enough to have a blinking red light on their phone. At its peak around 2012, BlackBerry had roughly 80 million subscribers. The phones had physical keyboards, trackballs, and a culture built around instant, always-on messaging that predated WhatsApp by years.

But underneath the hardware, something else was shifting. The operating system was becoming the real battleground.

Nokia ran Symbian. In 2007, Symbian held roughly 64% of the global smartphone OS market. Nokia wasn’t just winning. Nokia was the market. But Symbian was a mess under the hood: an operating system designed for the constraints of the early 2000s, being stretched to handle the demands of 2008. Nokia’s engineers knew this. Internally, a team had been building Maemo, a Linux-based OS that first appeared on the N770 tablet in 2005. By the time the N900 launched in 2009, Maemo was on its fourth generation. It was good. It was promising. And it was being sabotaged by Nokia’s own Symbian executives, who saw it as a threat and blocked the Maemo team from adding phone functionality to their devices.

This is the part that never makes it into the business school case studies. Nokia didn’t lose to Apple because Apple had better technology. Nokia lost because Nokia’s internal politics wouldn’t allow its best technology to ship.

Then it got worse. Nokia partnered with Intel to merge Maemo with Intel’s Moblin into MeeGo. The result was an interoperability nightmare. Intel pushed x86 processors when the mobile world was moving to ARM. Development tools were incompatible. In February 2011, Stephen Elop, Nokia’s new CEO and a former Microsoft executive, announced that Nokia was abandoning both Symbian and MeeGo in favor of Windows Phone. Symbian, the OS that had held a majority share four years earlier, was now a footnote in a press release.

The MeeGo team, freed from corporate politics by the knowledge that they were already dead, unveiled the N9 in June 2011.

It was beautiful. It ran well. Reviewers loved it. It was Nokia’s first and last MeeGo device. By 2014, Microsoft had acquired Nokia’s devices division. The OS war was over for Nokia. It had lost not to a competitor, but to itself.

Meanwhile, on the other side of the world, Palm had built webOS. Unveiled in January 2009 and shipped on the Palm Pre that June, it introduced card-based multitasking and gesture navigation years before either iOS or Android adopted similar concepts. It was ahead of its time and behind on execution. HP bought Palm for $1.2 billion in 2010, announced grand plans to put webOS on everything from laptops to printers, and then abandoned those plans almost immediately. In 2013, LG purchased webOS to power its smart TVs. A mobile operating system designed to replace the iPhone ended up running television menus.

The OS story is important because it reveals how the industry was thinking. Every company wanted to own the surface. The phone was no longer enough. The OS was the platform. The platform was the business. Control the OS, control the apps, control the user. Apple understood this. Google understood this. Nokia, Palm, HP, BlackBerry, and Microsoft Mobile all understood it too. They just couldn’t execute.

And the surface kept growing. From phones to tablets. From tablets to watches. From watches to TVs. The same platform battle replayed on every new rectangle, every new surface. The assumption was always the same: more surfaces, more screens, more real estate for software to live on.

Now that expansion is running into a new reality. Smart TVs and wearables have fragmented ecosystems, inconsistent long-term support by model and vendor, and a developer landscape that often forces teams to build multiple versions of the same experience. The surface kept expanding. The experience kept fragmenting.

The Rectangle Takes Over

The iPhone launched in June 2007 with no app store. It had 16 built-in applications. You could browse the web, play music, check email, and make calls. That was roughly it. The genius wasn’t what it could do. It was the surface itself: a capacitive touchscreen that turned the entire face of the device into an interactive plane.

When the App Store opened on July 10, 2008, it had 500 apps. Within a year, that number passed 70,000. By the time 4G networks rolled out and made high-bandwidth mobile experiences viable, the rectangle had won. Every interaction, every service, every business, was supposed to live inside an app on a 4-to-6-inch screen.

In India, Reliance Jio lit the fuse in September 2016. The mission that Mukesh Ambani articulated was deceptively simple: make data so cheap that sending a gigabyte costs less than sending a letter through the post office. Make connectivity a utility, not a luxury. I was fortunate enough to work with the family on delivering that mission, and the scale of what happened still catches me off guard. Within 170 days, 100 million people signed up. Data consumption in the country increased six-fold in six months, to 1.2 billion gigabytes per month. India became the world’s largest mobile data consumer overnight. Today, Jio delivers data at about 15 cents per gigabyte. The US average is around $5. The global average is around $3.50.
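Those numbers are easier to feel when reduced to rates. A quick back-of-the-envelope calculation, using only the figures quoted above (the variable names are mine):

```python
SECONDS_PER_DAY = 24 * 60 * 60

# 100 million sign-ups in 170 days, expressed as a sustained rate.
signups_per_second = 100_000_000 / (170 * SECONDS_PER_DAY)

# A six-fold increase that ends at 1.2 billion GB a month implies this starting point.
monthly_gb_before = 1.2e9 / 6

# Price per gigabyte relative to Jio's roughly $0.15.
us_vs_jio = 5.00 / 0.15
global_vs_jio = 3.50 / 0.15

print(f"{signups_per_second:.1f} sign-ups per second")
print(f"{monthly_gb_before / 1e9:.1f} billion GB a month before the jump")
print(f"US price is about {us_vs_jio:.0f}x Jio's; global average about {global_vs_jio:.0f}x")
```

That is nearly seven new subscribers every second, around the clock, for almost six months, at a price per gigabyte more than twenty times lower than the global average.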

Suddenly, everyone had a smartphone. Everyone had data. And the assumption was: now everyone needs apps. Lots of apps. An app for payments. An app for groceries. An app for cab rides. An app for each bank. An app for the government. An app for the railway. An app for ordering gas cylinders.

The average smartphone today has about 80 to 100 apps installed. The average person uses 9 of them in any given day. Over 70% of installed apps are opened less than once a month. Many are never opened again after installation.

Global app downloads peaked and have been declining for several years. The surface expanded to its maximum. And people quietly stopped using most of it.

The Super App Detour

The super app was supposed to solve this. One app to rule them all. Instead of 80 apps cluttering your home screen, you’d have one that did everything: messaging, payments, rides, food delivery, shopping, government services.

WeChat made this work in China. A billion monthly active users. Mini Programs that let third-party developers build lightweight apps inside WeChat’s ecosystem. You could hail a cab, split a dinner bill, pay your electricity, book a doctor’s appointment, and argue with your cousin about politics, all without leaving the app. WeChat became less an application than an operating system for daily life.

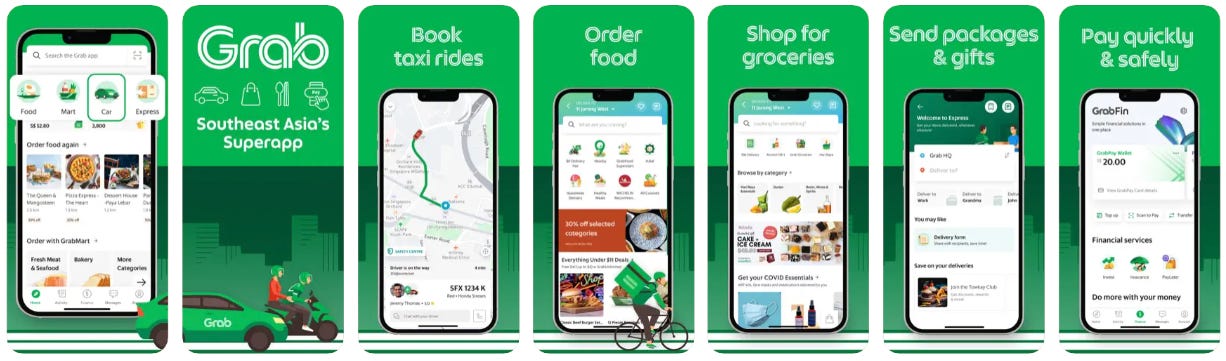

In Southeast Asia, Grab and GoJek followed a similar trajectory. GoJek started in 2010 as a call center connecting motorcycle taxi drivers in Jakarta. By 2021, it had merged with Tokopedia and was driving roughly 2% of Indonesia’s trillion-dollar GDP. Grab, born from a Harvard Business School project, grew from a taxi-booking app in Kuala Lumpur to a financial services platform serving tens of millions of users across multiple countries. Both succeeded by collapsing an entire economy of services into a single surface.

India tried. Repeatedly.

Paytm, flush with the demonetization windfall, tried to become a super app. Tata Neu launched in April 2022, backed by one of India’s oldest and most trusted conglomerates, and was met with technical glitches and indifference. Hike Messenger, which raised hundreds of millions to become India’s WeChat, gave up and unbundled back into separate apps in 2019. Reliance’s MyJio tried to bundle its sprawling ecosystem into a single interface.

None of it stuck.

The diagnosis was structural. India’s consumers had already built loyalty to specialized apps. Swiggy for food. Zomato for restaurants. Flipkart and Amazon for shopping. PhonePe and Google Pay for payments. Ola and Uber for rides. The super app demanded that users abandon tools they already trusted in favor of one that did everything adequately and nothing exceptionally. In a market where the average user had just gotten comfortable navigating individual apps, the super app asked them to navigate an entire operating system within an app. The complexity grew faster than the convenience.

The super app worked in China partly because WeChat got there first, before specialized alternatives had time to establish themselves. In India, the specialized apps had already won their territory. You can’t consolidate what’s already fragmented by choice.

Text 2.0

Here’s where the story bends back on itself.

In November 2022, OpenAI released ChatGPT. The interface was a text box. Type what you want. Get a response. No menus. No navigation. No buttons to find. No settings to configure. Just a blinking cursor and the English language.

Within two months, it had reached 100 million users. By late 2025, ChatGPT had around 700 million weekly active users. By early 2026, reporting put the figure above 800 million.

Think about that growth curve in the context of everything that came before. The App Store took years to reach saturation. Super apps spent billions trying to consolidate services. And a text box with a language model behind it became one of the most-used software products in history within three years.

The surface shrank to almost nothing. A chat window. The same interaction pattern as SMS, except the entity on the other end understands context, remembers your previous messages, and can generate a five-page analysis of your business plan or debug your Python script or draft an email to your landlord.

This is text 2.0. The same surface we started with in 1992 when Neil Papworth typed “Merry Christmas.” Except now the 160-character limit is gone, the recipient is a statistical model trained on most of recorded human writing, and the response comes in seconds.

In India, 86% of adults message a business on WhatsApp at least weekly. 62% have made a purchase through a messaging app. In Brazil, a large majority of consumers have contacted a business through WhatsApp. WhatsApp processes over 100 million business messages daily. The global WhatsApp Business API market was worth $2.1 billion in 2024, projected to reach $13.3 billion by 2033.

Commerce is migrating back to text. Customer service is migrating back to text. Even complex financial transactions are migrating back to text. Not because text is primitive, but because text is the lowest-friction interface that preserves full expressiveness. You can say anything in text. In a dropdown menu, you can say only what the designer anticipated.

The USSD menus that moved billions across Sub-Saharan Africa and the ChatGPT window that reached hundreds of millions of weekly users share the same structural insight: when the system on the other end is capable enough, the surface can be minimal. The intelligence moves from the interface to the infrastructure.

The Voice Comes Back

In May 2018, Sundar Pichai stood on stage at Google I/O and played a recording of Google Duplex calling a hair salon to book an appointment. The AI used filler words. It said “um” and “mm-hmm.” The person on the other end had no idea they were talking to a machine.

The audience gasped. Then, largely, nothing happened. Duplex was quietly deployed for a narrow set of tasks: restaurant reservations, appointment bookings, checking holiday hours. It was impressive and irrelevant. A technology demo in search of a market.

Seven years later, the market has arrived.

OpenAI’s Advanced Voice Mode launched in September 2024, turning ChatGPT into something that listens and speaks in real time with emotional nuance. Their Realtime API, which became generally available in August 2025, lets developers build voice-native AI experiences. It supports SIP phone calling. An AI can now pick up an actual phone line and have a conversation.

The restaurant industry alone has spawned a cluster of startups building AI voice agents that answer phones, take orders, make reservations, and handle inquiries 24/7. Restaurants miss 20-40% of incoming calls during peak hours. These agents don’t miss calls. They don’t get flustered during the Friday dinner rush. One platform, Hostie AI, reported a 97% peak-hour answer rate across 500,000 calls.

But restaurant bookings are just the visible edge. The deeper shift is that voice, the original telecom surface, the thing we started with before everything else, is becoming programmable. Not in the IVR sense of “press 1 for billing.” In the sense of: you speak naturally, and something that understands language, context, and intent responds naturally.

We went from voice calls to text messages to USSD menus to mobile browsers to native apps to super apps. Each step added complexity, added surface area, added things to look at and tap on and navigate. And now the trajectory is reversing. The surface is collapsing back to text and voice. The two things we started with.

Except this time, what’s on the other end isn’t a person or a phone tree. It’s an agent.

The Agent Doesn’t Need a Screen

OpenAI released Operator in January 2025: an AI agent that browses the web autonomously, clicking buttons, filling forms, navigating sites on your behalf. By mid-2025, it had been folded into ChatGPT itself. You just tell it what you want, in text or voice, and it goes and does it.

Anthropic built the Model Context Protocol, an open standard its documentation likens to a USB-C port for AI applications, that lets AI agents plug into tools, databases, and APIs through one common interface instead of a custom integration for each. Their Agent SDK supports subagents that can work in parallel, manage their own context, and operate autonomously for extended periods.
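What "one common interface" means in practice is a shared message format. As I understand the published specification, MCP messages are JSON-RPC 2.0, and a client lists and invokes a server's tools with the `tools/list` and `tools/call` methods. The sketch below only builds those two requests. The tool name `check_in_for_flight` and its arguments are hypothetical, and the transport and initialization handshake are left out.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry an id so replies can be matched


def jsonrpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request object as a plain dict."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# Ask the server which tools it offers.
list_tools = jsonrpc_request("tools/list")

# Invoke one of them. The tool and its arguments are invented for illustration.
call_tool = jsonrpc_request(
    "tools/call",
    {
        "name": "check_in_for_flight",
        "arguments": {"booking_ref": "ABC123", "seat": "aisle"},
    },
)

wire = json.dumps(call_tool)  # what would actually travel to the server
print(wire)
```

Nothing in that message describes a screen. The request names a capability and supplies arguments, which is a closer cousin to a USSD keypress than to anything in an app store.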

These aren’t chatbots. Chatbots understand what you said and generate a response. Agents understand what you want and go do it. The distinction is the same as the difference between a person who gives you directions and a person who drives you there.

When the agent can act on your behalf, the interface requirements collapse further. You don’t need an app for your airline because the agent can go to the airline’s website, or call their phone line, or hit their API. You don’t need a food delivery app because the agent can handle the ordering. You don’t need a banking app because the agent can execute the transaction through whatever channel the bank exposes.

The 80 apps on your phone were 80 different surfaces, each with its own navigation, its own account, its own notification preferences, its own design language. The agent replaces all of those surfaces with a single conversational layer. Text or voice. The same two modalities we started with in the 2G era.

And here’s where it gets interesting for the operating system narrative. The App Store was Apple’s marketplace. Google Play was Google’s. Both were gated. Both took 30%. Both decided what shipped and what didn’t. The emerging equivalent for agents is something like OpenAI’s Skills framework, or Anthropic’s MCP server ecosystem: registries of capabilities that agents can discover and use on your behalf. The skill becomes the new app. The protocol becomes the new distribution channel. Except nobody has to download anything. Nobody has to learn a new interface. The agent finds the skill, invokes it, and reports back.
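The find, invoke, report loop is simple enough to sketch. This is a toy under stated assumptions, not any vendor's framework: the `Skill` type, the two registered skills, and the keyword-overlap matching in `discover` are all hypothetical, with the matching standing in for the judgment a language model would supply.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Skill:
    name: str
    description: str  # what the agent reads to decide whether the skill fits
    run: Callable[[dict], str]


# Hypothetical registry. In practice this would be a remote catalogue, not a list.
REGISTRY = [
    Skill("book_table", "reserve a restaurant table",
          lambda a: f"Table for {a['party']} booked at {a['time']}"),
    Skill("send_money", "transfer money to a phone number",
          lambda a: f"Sent {a['amount']} to {a['to']}"),
]


def keywords(text: str) -> set:
    """Lowercased words longer than two characters, to skip 'a', 'to', and similar."""
    return {word for word in text.lower().split() if len(word) > 2}


def discover(request: str) -> Optional[Skill]:
    """Pick the skill whose description shares the most keywords with the request."""
    wanted = keywords(request)
    scored = [(len(wanted & keywords(skill.description)), skill) for skill in REGISTRY]
    score, skill = max(scored, key=lambda pair: pair[0])
    return skill if score > 0 else None


def handle(request: str, args: dict) -> str:
    """Find a skill, invoke it, and report back in plain text."""
    skill = discover(request)
    if skill is None:
        return "No matching skill found"
    return skill.run(args)


report = handle("please reserve a table for tonight", {"party": 2, "time": "20:00"})
print(report)
```

The user sees one sentence in and one sentence out. Which skill ran, who published it, and what its interface looks like never reach the surface.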

If that model matures, the marketplace shifts from apps-that-humans-navigate to skills-that-agents-invoke. The entire presentation layer disappears. The OS, in the traditional sense, stops mattering. What matters is the agent’s ability to connect to services. The platform is the protocol, not the screen.

Skipping the Super App

The super app was an attempt to solve a real problem: fragmentation. Too many apps, too many accounts, too much cognitive overhead. The solution was consolidation. Put everything in one place.

But the super app was still an app. It still required a screen. It still required navigation. It still required the user to understand where things lived inside a complex interface. It solved fragmentation by creating a larger, more complex surface. And in markets like India, where users had already committed to specialized tools, the larger surface was a harder sell than the fragmentation it was trying to fix.

The agent solves the same problem differently. Instead of consolidating services into one interface, it eliminates the need for the user to interact with any interface at all. The agent navigates on your behalf. The consolidation happens in the intelligence layer, not the presentation layer.

This is why I think India, and other markets where super apps failed, might leapfrog directly to agent-mediated interaction. The same way large parts of Africa leapfrogged bank branches entirely and went straight to M-Pesa. The same way India leapfrogged credit cards and went straight to UPI. The same way Jio proved you didn’t need a decade of incremental price reductions to bring a country online. You needed one bold bet and the infrastructure to back it.

The progression isn’t linear. It doesn’t go: apps, then super apps, then agents. It can skip a step. When the infrastructure is ready and the pain is real, markets jump. Ambani is already talking about doing for AI what Jio did for data. That should make people pay attention.

Consider the parallels. M-Pesa succeeded because it met people where they were: on basic phones, with a SIM toolkit and USSD menus, in a market where bank branches were inaccessible. The agent model succeeds for a similar structural reason: it meets people where they are, in a conversation, and handles the complexity behind the scenes. In both cases, the user’s surface is minimal. The system’s capability is what matters.

The Hardware Question Nobody Is Answering Well

If the surface is collapsing back to voice and text, what happens to the hardware?

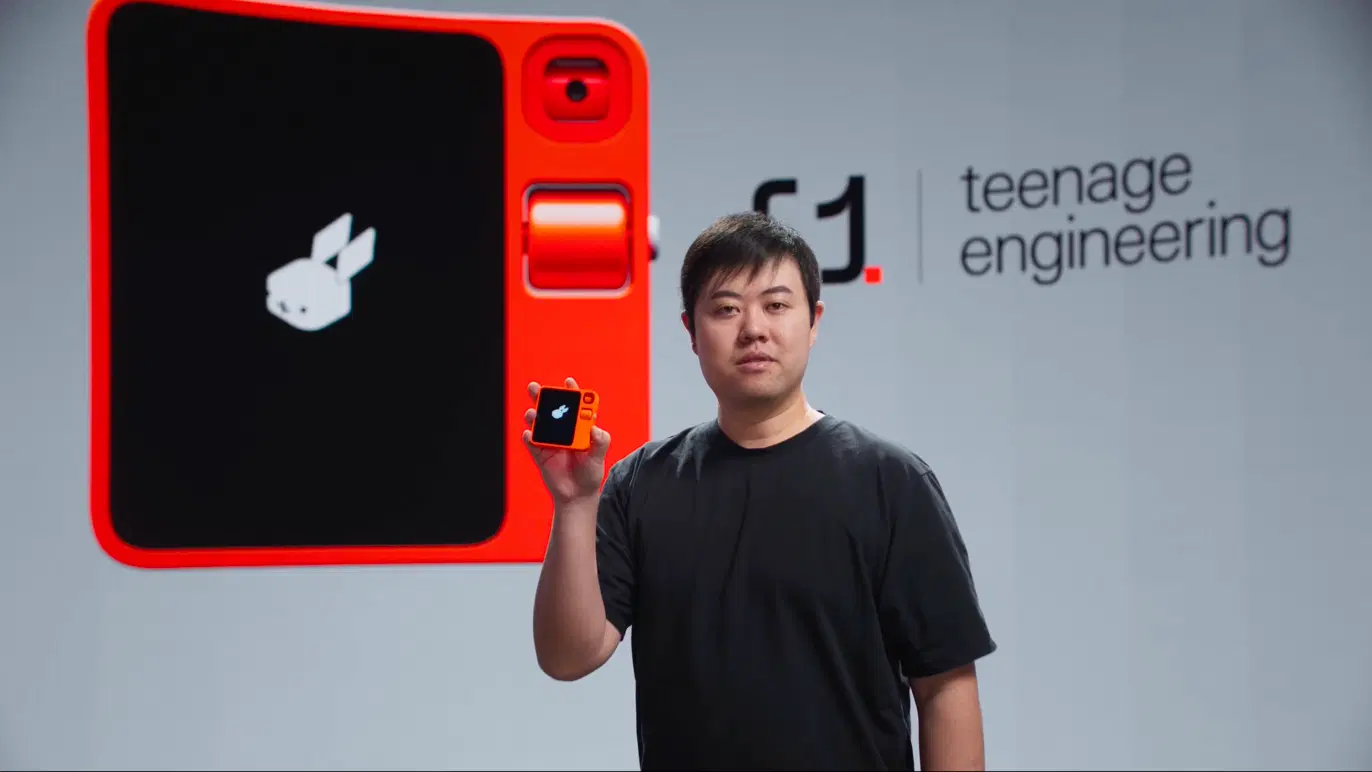

Humane tried to answer this in 2024 with the AI Pin: a $699 screenless device that projected a laser display onto your palm and responded to voice. Rabbit tried with the R1: a $199 handheld with a scroll wheel and a camera.

Both were commercial disasters. Reviewers concluded, fairly, that both devices were apps pretending to be hardware. Neither did anything your phone couldn’t do better.

But the failure wasn’t in the ambition. It was in the timing and the thesis. Both products assumed the agent needed a new physical form factor. That assumption may be wrong. If the agent lives in the cloud, connects through protocols, and communicates through voice and text, it doesn’t need a dedicated device. It needs access to a microphone and a speaker. Your phone has those. Your earbuds have those. Your car has those. Your watch has those.

Or maybe the question is more radical than that.

Chipchase found that people carry three things: keys, money, phone. The phone already absorbed the money. It’s absorbing the keys. What absorbs the phone?

Neuralink has implanted 21 patients as of early 2026. Noland Arbaugh, the first recipient, controls a computer cursor with his thoughts. That’s a brain-computer interface operating as a pointing device. Today. The resolution is crude. The use case is assistive. But the trajectory is clear: the surface is moving from something you hold, to something you wear, to something that runs at the boundary of your nervous system.

I don’t think that’s the near future. I think the near future is more mundane and more interesting: the agent becomes ambient. It lives in your earbuds. It listens when you ask it to. It speaks when you need it to. It acts through protocols and APIs and phone calls and web browsing and whatever other channel gets the job done. You don’t open it. You don’t navigate it. You talk to it. Or you text it. And it handles the rest.

The physical world doesn’t go away. You still walk into a store. You still sit in a restaurant. You still board a flight. But the digital mediation layer between you and those experiences changes. Instead of pulling out a rectangle and tapping through an app to check in for your flight, you just walk to the gate. The agent already checked you in. It already has your boarding pass. It already knows your seat preference and your meal choice and the fact that you always want an aisle.

Is that an interface? Is “no interface” itself a design surface? What does it mean to design for something you can’t see and the user doesn’t consciously interact with?

Where This Leads

I keep coming back to the shape of the arc.

1992: text. 2003: voice and text on devices that sold a quarter billion units. 2007: USSD menus moving billions of dollars. 2007 again: the iPhone. 2008: 500 apps. 2016: a billion gigabytes a month. 2019: super apps succeed in China, fail in India. 2022: a text box reaches 100 million users in two months. 2025: voice agents pick up phone lines. 2026: agents browse the web and invoke skills autonomously.

The surface expanded for thirty years. Phones, tablets, watches, TVs, cars. More screens, more pixels, more things to tap. Now it’s contracting. And the contraction isn’t a regression. It’s a phase change. The intelligence that used to require a visual surface to be useful can now operate without one.

Chipchase understood, two decades ago, that the phone wasn’t the product. The capability was the product. The surface was just the delivery mechanism. Everything that has happened since is the long, expensive, circuitous process of the industry arriving at the same conclusion.

The question I keep turning over is not whether agents replace apps. That feels increasingly obvious. It’s what happens to the spaces in between. The physical spaces. The social rituals. The moments where you currently pull out a phone and break eye contact. If the agent handles the digital layer invisibly, what happens to your attention? Where does it go? Back to the person across the table? Back to the field you’re standing in?

There’s a version of this future that’s dystopian. An ambient intelligence that knows too much, acts without consent, optimizes for engagement rather than wellbeing. We’ve seen that movie. Social media wrote the script.

And there’s a version that looks more like what Vannevar Bush and Douglas Engelbart originally imagined: computation that amplifies human capability without demanding human attention. A system that works for you, not one that works on you.

The woman in Tamil Nadu didn’t need an interface. She needed to hear her daughter’s voice. The Kenyan farmer didn’t need a banking app. He needed to send money to his brother. The surface was never the point.

Thirty years of expanding it taught us what we already knew.